Early in a research career, a literature review feels finite. You read enough papers, take enough notes, and eventually arrive at something resembling understanding.

That illusion does not survive contact with a mature field.

Once a domain reaches scale, the problem is no longer coverage. It is coherence. Hundreds or thousands of papers exist, many of them technically competent, some of them influential, and a non-trivial fraction mutually incompatible. The task shifts from finding knowledge to organizing uncertainty.

Senior researchers rarely describe how they do this. Not because the process is secret, but because much of it is tacit. What follows is an attempt to make that tacit process explicit.

Most researchers eventually encounter the same failure mode: reading no longer improves clarity.

You read another paper and gain detail but lose structure. New findings arrive faster than old ones can be integrated. Claims accumulate without being resolved. The literature grows, but understanding plateaus.

This happens because the unit of work has remained the paper, even though the unit of understanding has changed.

Papers are optimized for novelty, not synthesis. They answer narrow questions under specific conditions. Treating them as building blocks for global understanding leads to redundancy, contradiction, and false consensus.

Experienced researchers stop asking “What does this paper say?” and start asking “Where does this fit?”

The first conceptual shift is subtle but decisive: papers are containers, not content.

A single paper may:

Senior researchers extract claims and mentally discard the container.

This does not require formalism. It requires discipline. Claims must be written precisely enough to be challenged, scoped enough to be wrong, and detached enough to be compared across studies.

Once you do this, a surprising amount of literature collapses into repetition.
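As a minimal sketch of what this extraction might look like in practice, the Python below stores each claim separately from the paper that reported it, then groups claims that say the same thing under the same conditions. The `Claim` fields, the `group_by_statement` helper, and the example entries are all hypothetical, chosen only for illustration. Matching on a normalized string is a crude stand-in for the judgment a researcher would actually apply.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One claim, detached from the paper (the container) that reported it."""
    statement: str  # precise enough to be challenged
    scope: str      # the conditions under which it is asserted
    source: str     # hypothetical paper identifier, kept only for traceability


def group_by_statement(claims):
    """Group claims whose statement and scope match after trivial normalization."""
    groups = defaultdict(list)
    for claim in claims:
        key = (claim.statement.strip().lower(), claim.scope.strip().lower())
        groups[key].append(claim.source)
    return dict(groups)


# Illustrative placeholder data, not real findings.
claims = [
    Claim("X increases Y", "adults, lab setting", "paper-A"),
    Claim("x increases y", "Adults, lab setting", "paper-B"),
    Claim("X increases Y", "adolescents, field setting", "paper-C"),
]

for (statement, scope), sources in group_by_statement(claims).items():
    print(f"{statement} [{scope}]: {len(sources)} source(s)")
```

Here three papers reduce to two distinct claims. Keeping `scope` in the grouping key is a deliberate choice: the same statement made under different conditions is treated as a different claim.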

One of the most common sources of confusion in mature fields is unacknowledged heterogeneity.

Researchers often believe they are disagreeing about results when they are actually disagreeing about:

These fractures accumulate gradually. Terminology remains stable while meaning drifts.

Senior researchers detect this by paying obsessive attention to measurement. Not because measurement is glamorous, but because it is where hidden assumptions live.

Two literatures using the same words but different instruments are not converging. They are passing each other.
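One way to make this check concrete, sketched here under stated assumptions, is to record which instrument each study used for each term and flag any term measured in more than one way. The function names and the example studies are hypothetical, and treating instruments as plain strings ignores the harder question of whether two instruments are actually equivalent.

```python
from collections import defaultdict


def instruments_by_term(studies):
    """Map each construct term to the set of instruments used to measure it."""
    usage = defaultdict(set)
    for term, instrument in studies:
        usage[term.lower()].add(instrument)
    return usage


def drifting_terms(studies):
    """Return terms measured with more than one instrument, sorted by name."""
    usage = instruments_by_term(studies)
    return sorted(term for term, used in usage.items() if len(used) > 1)


# Illustrative placeholder data: (term, instrument) pairs.
studies = [
    ("engagement", "self-report survey"),
    ("engagement", "behavioral log"),
    ("retention", "90-day follow-up"),
]

print(drifting_terms(studies))  # → ['engagement']
```

A flagged term is not proof of a fracture. It is a prompt to read the measurement sections side by side before comparing results.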

Another tacit rule senior researchers internalize is that journal rank cannot do the work of evaluation.

Prestige filters for attention, not for durability. It correlates only imperfectly with design quality, measurement validity, and robustness. In some subfields, it may even correlate negatively with the likelihood that a result replicates.

When synthesizing evidence, experienced researchers quietly re-rank studies according to criteria that are rarely stated explicitly:

This internal reordering is one of the main differences between novice and senior understanding. The literature looks different once prestige is removed as an organizing principle.
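The reordering can be sketched in a few lines of Python. This is an illustration, not a scoring method the article prescribes: the three rating fields borrow the qualities named above (design quality, measurement validity, robustness), while the 1 to 5 scale, the equal weighting, and the example studies are assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class Study:
    name: str
    journal_rank: int          # 1 = most prestigious; deliberately unused below
    design_quality: int        # hypothetical 1-5 reviewer rating
    measurement_validity: int  # hypothetical 1-5 reviewer rating
    robustness: int            # hypothetical 1-5 reviewer rating


def rerank(studies):
    """Order studies by summed quality ratings, ignoring journal rank."""
    return sorted(
        studies,
        key=lambda s: s.design_quality + s.measurement_validity + s.robustness,
        reverse=True,
    )


# Illustrative placeholder data, not real studies.
studies = [
    Study("study-A", journal_rank=1, design_quality=2, measurement_validity=3, robustness=2),
    Study("study-B", journal_rank=3, design_quality=5, measurement_validity=4, robustness=4),
]

print([s.name for s in rerank(studies)])  # → ['study-B', 'study-A']
```

The point of the sketch is the field that the sort key never touches. Once `journal_rank` is left out, the lower-prestige study moves to the top.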

Junior researchers often treat disagreement as failure. Senior researchers treat it as information.

When evidence conflicts, the key question is not “Who is right?” but “Why does this disagreement exist?”

Common explanations include:

Disagreement is rarely random. Mapping its structure often reveals where real uncertainty lives.

A field with no visible disagreement is not mature. It is opaque.

Experienced researchers develop a sense for how claims age.

They notice patterns:

This temporal intuition is rarely written down, but it strongly influences judgment.

A synthesis that ignores time treats all evidence as equally current and equally informative. Senior researchers do not do this, even if they cannot always articulate why.

One of the most important habits senior researchers develop is explicit calibration.

Rather than treating claims as true or false, they assign them provisional confidence:

They also know what would change their minds.

Making confidence explicit does not weaken an argument. It strengthens it by revealing where uncertainty genuinely lies.
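A minimal sketch of what an explicit confidence record could look like follows. The three-level `Confidence` scale, the `CalibratedClaim` class, and the example claims are hypothetical; the only idea taken from the text is that a claim carries both a provisional confidence and a statement of what would change it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Confidence(Enum):
    """Hypothetical three-level scale; any ordered scale would serve."""
    LOW = 1
    MODERATE = 2
    HIGH = 3


@dataclass
class CalibratedClaim:
    statement: str
    confidence: Confidence
    # Evidence that, if observed, would lead to revising the confidence level.
    would_change_my_mind: list[str] = field(default_factory=list)

    def is_calibrated(self) -> bool:
        """True only if the claim names at least one piece of revising evidence."""
        return len(self.would_change_my_mind) > 0


# Illustrative placeholder claims, not real findings.
examined = CalibratedClaim(
    "X increases Y in adults",
    Confidence.MODERATE,
    ["a preregistered replication with a null result"],
)
unexamined = CalibratedClaim("Z causes W", Confidence.HIGH)

print(examined.is_calibrated(), unexamined.is_calibrated())  # → True False
```

Note that the high-confidence claim is the one that fails the check. Under this sketch, confidence without a stated revision condition counts as uncalibrated, however strongly it is held.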

This is also where many literature reviews fail. They imply certainty without earning it.

A synthesis that exists only to justify a paper's introduction is incomplete.

Senior researchers use their internal knowledge maps to:

Understanding that does not change behavior is not understanding. It is annotation.

AI meaningfully changes the mechanics of synthesis, but not its logic.

It can:

It cannot:

Used carefully, AI reduces clerical load and surfaces structure. Used carelessly, it produces polished confusion.

SciWeave is designed for the former use case: grounded synthesis anchored in citations, not abstract fluency.

The difference between junior and senior researchers is not intelligence or diligence.

It is comfort with uncertainty, selectivity with attention, and the willingness to say “we do not know” precisely rather than vaguely.

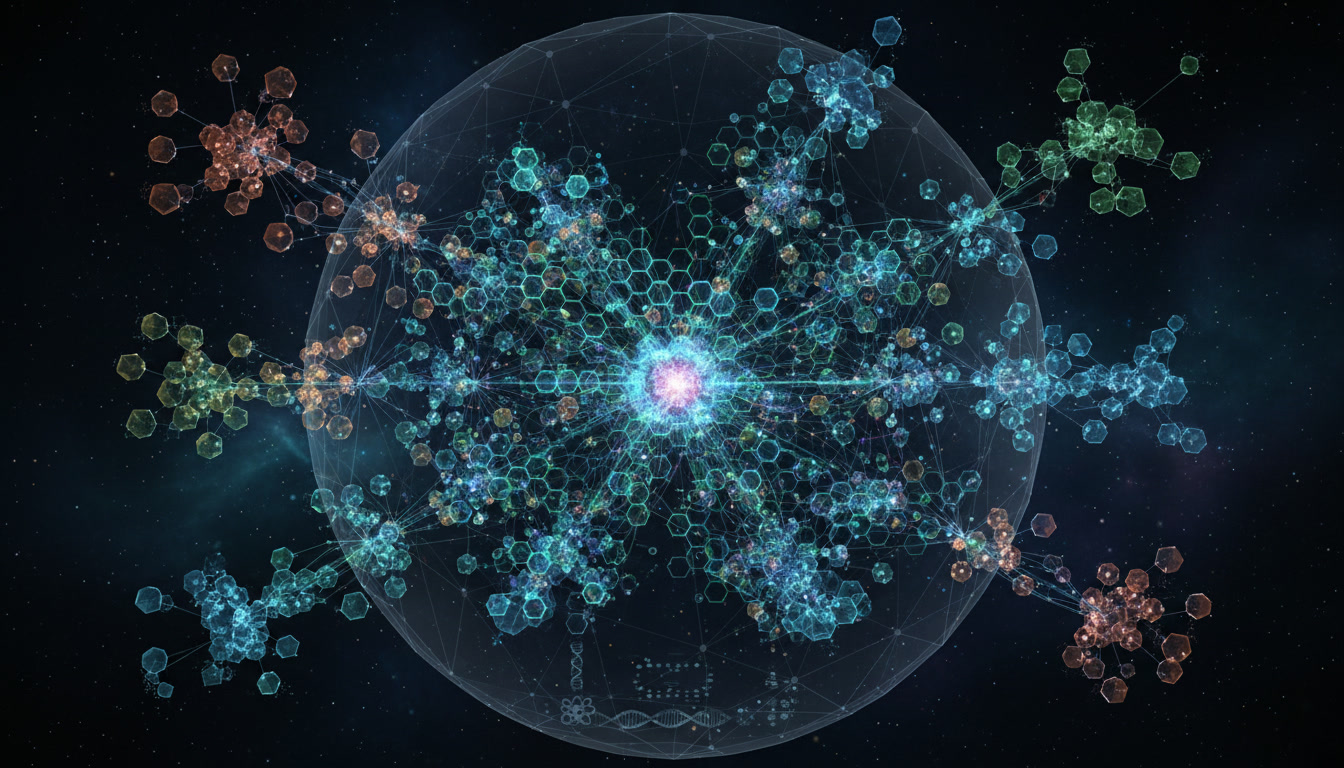

Moving from a literature review to a knowledge map is how that comfort becomes operational.
